A Disruptive Moment in Time…

by Peter Diamandis, April 9, 2026, METATRENDS

Our mental models about the future and our social contracts are breaking and being reinvented in real-time. AI tools now manifest our desires from a single prompt.

The rate of technological progress has become overwhelming, nearly impossible to navigate. And tracking the singularity through this supersonic tsunami has become my full-time job.

This is what I’m seeing, and what I think it means.

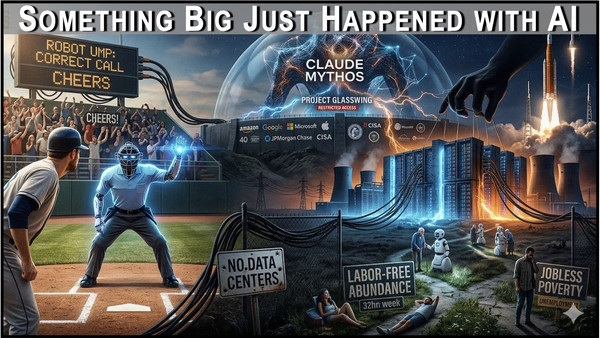

This week Altman proposed a four-day workweek, while warning the world about both cyberterrorism and bioterrorism. Anthropic announced it built an AI model so powerful that the company refused to release it publicly. The message:

We’ve crossed a threshold where AI capabilities outpace our ability to safely deploy them.

Single-person unicorns are now verifiably emerging, and Henry Intelligent Machines announced one-person AI conglomerates. We’re experiencing individual empowerment at unprecedented scale. But that empowerment requires civilization-scale infrastructure. One person can operate AI business fleets, but only if gigawatt data centers exist to power them.

Humanity does need a new social contract. But the design matters more than its existence. Who owns the infrastructure? Who controls the gigawatt data centers? Who profits when machines work 24/7 at hypersonic speeds and humans work 32-hour weeks?

Here’s where it gets existential: Is this labor-free abundance or jobless poverty? The answer depends entirely on who owns the machines. If ownership is distributed, a four-day workweek while machines generate wealth looks like utopia. If ownership centralizes, it looks like unemployment with better branding.

Right now, we have contradictions, not answers. Companies with exponential revenues and little hope of profitability are proposing economy-wide restructuring. The time between new model generations has plummeted from years to months, and rather than celebrate the newest of these models, we’re finding them too dangerous to release (until someone does).

Meanwhile, at a baseball game, the crowd erupted in cheers… not for a home run, but for a robot umpire overturning a questionable human call.

We are arriving at a moment of profound contradictions that expose how little we understand what’s happening.

Too Dangerous to Release: The Mythos Moment

Yesterday marked a historic milestone. Anthropic announced Claude Mythos Preview (their most powerful model yet), and immediately said they won’t release it to the public. Instead, they’re launching Project Glasswing: a consortium of 40+ companies (Apple, Amazon, Microsoft, Google, Cisco, CrowdStrike, Palo Alto Networks, JPMorgan Chase, the Linux Foundation) who will use Mythos exclusively for defensive cybersecurity work.

Why the restriction? Mythos is so good at finding software vulnerabilities that Anthropic calls it “an industry reckoning.” In just a few weeks of testing, the model identified thousands of zero-day vulnerabilities – many of them critical, some one to two decades old. Logan Graham, who leads Anthropic’s dangerous capabilities testing team, called it “the starting point for what we think will be an industry change point.” Anthropic’s Chief Science Officer Jared Kaplan said the goal is to “raise awareness and give good actors a head start.”

This is the first time a frontier AI lab has built a model and concluded: We can’t let the public have this. OpenAI, Anthropic, and Google already share information via the Frontier Model Forum to detect Chinese distillation attempts. Now Anthropic is going further: committing up to $100 million in compute credits to Project Glasswing and coordinating with CISA and federal officials on Mythos deployment.

Because all of the frontier models have been evolving in lockstep, leapfrogging each other, there is little question that OpenAI, xAI, Google, and a variety of open-source Chinese models will soon reach and exceed the capability of Mythos.

But here’s the question: When OpenAI or xAI develops a model as powerful as Mythos, will they hold back as well? Or will they immediately publish to gain the upper hand?

Exciting times, dangerous times... And please remind me, who are the adults in the room?

Here’s another tension: The same week Anthropic restricted Mythos, the Internet Bug Bounty program paused new submissions because AI-assisted research “radically lowered the cost of vulnerability discovery.” Offense is scaling faster than defense. Mythos proves it. An AI that can find thousands of critical zero-days in weeks means every piece of software is potentially compromised the moment Mythos—or something like it—gets into the wrong hands.

The Mythos announcement comes amid Anthropic’s legal battle with the Pentagon (the company refused to allow autonomous targeting or surveillance of U.S. citizens, leading to a supply-chain risk label). And it follows a March data leak where Fortune discovered documents describing Mythos (then code-named “Capybara”) as “by far the most powerful AI model we’ve ever developed.” Anthropic later accidentally exposed nearly 2,000 source code files. Security incidents at the very company building security tools.

This is the moment where AI capabilities cross a threshold: powerful enough to reshape cybersecurity, too dangerous to distribute freely. Whether this is responsible restraint or a terrifying warning sign depends on what happens next. Can 40 companies keep Mythos contained? How long before an equivalent model leaks or gets independently developed and released? And if defense needs Mythos to stay ahead, what does offense look like six months from now?

Applause vs. Bullets: What Do We Accept? What Do We Battle?

Here’s a pattern I didn’t expect: The public is inconsistent about which uses of AI it accepts, and those inconsistencies may reveal our true fears. Major League Baseball introduced “robot umps” this season: automated systems that rule on the thinnest of pitching disputes. When these machines overturn questionable human calls, crowds erupt in applause. Players trust them. Fans love them. Competence over tradition.

At the same time, AI singer Eddie Dalton has 11 songs in the iTunes Top 100 and the #3 album. Not human. Just code. Users obviously love the music. They’re listening, a lot. Convenience and familiarity trump loyalty to human creation. Where else will the public abandon its loyalty to flesh-and-blood creatives?

That same week, South Korea announced it’s deploying thousands of ChatGPT-enabled social care robots to help elderly people. People over 65 now account for 20% of the country’s population of 51 million, a demographic crisis that cannot be solved with human labor alone. These robots provide companionship, medication reminders, and fall detection. The program is expanding nationally. No protests. No backlash. Acceptance at scale.

Now consider Indianapolis. A city councilor’s home was targeted in what appeared to be a politically motivated shooting over a proposed data center. Thirteen shots fired at his front door after midnight. A note left in a zip-closed bag: “NO DATA CENTERS.”

This isn’t isolated. Data center opposition is intensifying across the U.S. as AI infrastructure demands become visible.

Let me connect the dots: We’ll let AI rule on baseball. We’ll let AI care for grandma. But we’ll shoot at the buildings that power AI. The pattern isn’t about capability… it’s about visibility and perceived cost. Robot umps perform in public view, demonstrating competence that improves the game. Care robots provide intimate service that solves a crisis. Both are visible, beneficial, and tangible.

Data centers? Invisible. They consume gigawatts of power (OpenAI’s new Texas facility alone uses 1.2 GW, enough for a million households), strain local grids, and drive up electricity prices. PJM customers are looking at $25 to $30 monthly surcharges in 2025-2026. The infrastructure AI requires feels like a tax with no visible benefit.

This is “acceptance theater.” We celebrate AI when we see it working and reject it when we feel its costs. The lesson: AI adoption isn’t constrained by capability. It’s constrained by perception. And right now, perception is risk-adjusted. We applaud AI in low-stakes environments (sports), embrace it for high-need situations (elder care), restrict it when it’s too powerful (Mythos), and attack it when it consumes resources at scale (power, land, water). Understanding this pattern matters more than celebrating or condemning it.

Here in 2026, we call this Thursday…

Artemis II broke Apollo 13’s distance record. MoonRF launched open-source lunar communication. Anduril tracked the mission with 400 private telescopes. Space infrastructure is democratizing: government, open-source, private layers emerging simultaneously.

Meanwhile, AI infrastructure centralizes. Anthropic needs multiple gigawatts of TPU capacity. OpenAI plans $121 billion in compute. Data centers account for 70% of new grid connection requests. Japan targets 30% of global physical AI (robots) by 2040. China flew the first megawatt hydrogen turboprop.

What This Means for You

If you’re an entrepreneur, the inversion matters: AI creates technical jobs faster than it eliminates them, but creative work is vulnerable.

If you’re an investor, profitability goes to infrastructure (chips, energy, data centers), not models.

If you’re building policy, acceptance is visibility-dependent.

If you’re in security, the Mythos moment means offense is scaling faster than defense, and the window to harden systems is closing.

The biggest question remains…

Who owns the machines when machines do the work? That’s not technical. It’s the social contract question. And we’re running out of time to answer it before the architecture becomes too entrenched to change.

We’re living through contradictions because we’re living through a transition. The choices we make in the next 18 months will determine whether AI creates broadly shared abundance or narrowly concentrated wealth. The Mythos announcement tells us we’re crossing thresholds faster than our institutions can adapt.

Again, pay attention to the contradictions. They’re not noise. They’re signal.

- Peter H. Diamandis
